This article was written by Alwayne Powell, the Senior Digital Marketing Manager at 8x8, a contact centre and communication platform. You can find them on LinkedIn.

As we embrace the reliability, agility, and innovative potential of the multi-cloud environment, observability in DevOps grows more critical.

Businesses are under escalating pressure to deliver swift continuity, quick fixes, and innovative, high-quality end-user experiences. Alongside streamlined processes and collaborative efficiency, DevOps teams need real-time access to detailed, correlative, context-rich data and analytics.

But within a multi-cloud environment, this grows increasingly difficult to achieve. Complex, distributed IT systems make it harder for us to glean meaningful data insights and resolve issues.

Observability delivers high visibility into dynamic environments. By understanding how observability in DevOps transforms development capabilities, you can maximize the effectiveness of your teams and your data.

Understanding Developer Observability

Observability is the ability to infer the current internal state of a system or application from the data it outputs. Observability tooling aggregates complex telemetry data (metrics, logs, and traces) from disparate systems and applications in your business. It then converts that data into rich, visual information via customizable dashboards.

This provides developers with deep visibility into complex, disparate systems. Unlike monitoring, which only offers surface-level visibility into system behaviors, observability can tell you more than simply what is happening. It can tell you why it’s happening, illuminating the root cause of an issue.
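To make the "what" versus "why" distinction concrete, here is a minimal Python sketch of how logs and traces might be correlated through a shared trace ID. The record layout, field names, and the `explain_errors` helper are all hypothetical, chosen only for illustration; real observability platforms define their own schemas.

```python
from collections import defaultdict

# Hypothetical telemetry records; the field names are assumptions for this sketch.
logs = [
    {"trace_id": "t1", "level": "ERROR", "message": "payment gateway timeout"},
    {"trace_id": "t2", "level": "INFO", "message": "order placed"},
]
spans = [
    {"trace_id": "t1", "service": "payments", "operation": "charge_card", "duration_ms": 5000},
    {"trace_id": "t1", "service": "checkout", "operation": "POST /pay", "duration_ms": 5021},
    {"trace_id": "t2", "service": "checkout", "operation": "POST /order", "duration_ms": 120},
]


def explain_errors(logs, spans):
    """Attach to each ERROR log the spans that share its trace ID, slowest first."""
    spans_by_trace = defaultdict(list)
    for span in spans:
        spans_by_trace[span["trace_id"]].append(span)

    findings = []
    for log in logs:
        if log["level"] != "ERROR":
            continue
        related = sorted(
            spans_by_trace[log["trace_id"]],
            key=lambda s: s["duration_ms"],
            reverse=True,
        )
        findings.append({"message": log["message"], "spans": related})
    return findings


findings = explain_errors(logs, spans)
for finding in findings:
    services = [s["service"] for s in finding["spans"]]
    print(finding["message"], "->", services)
```

A monitoring alert would only report that checkout errors occurred. Joining the error log to its trace shows which services took part in the failing request, which is the starting point for a root-cause investigation.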

As such, observability enables DevOps to identify, locate, correlate, and resolve bugs and misconfigurations. So, not only can teams solve issues faster, but they can improve system performance, deployment speeds, and end-user experiences.

The Transformation of Dev Roles

Cloud-nativity has transformed the role and responsibilities of software development teams. And, as a consequence, observability is paramount. Let’s get into it.

Evolving responsibilities of developers in the context of observability

Software bugs are unavoidable. But as the software world matures, so do customer expectations. And, in turn, so do software development responsibilities.

Customers want fast fixes and innovative new features. Developers need to accurately identify and diagnose bugs and misconfigurations to meet these expectations and drive operational efficiency. They also need insight into disparate applications (web, mobile, desktop, etc.) to analyze capabilities correctly.

This enables them to make informed, impactful decisions.

But here’s the problem. Cloud-nativity, serverless, open-source containerization, and other technology developments must be used to fuel accelerated, high-volume deployment. As we scale our technological environments to deliver speedy fixes and high-quality experiences, we risk losing critical production visibility.

Not to mention, the stress of managing and extracting data insights heightens as you accumulate more data.

Observability enables developers to carry out their responsibilities in alignment with demand. So, continuous integration and continuous delivery (CI/CD), improved delivery performance, proactiveness, and innovation can be achieved. Plus, it enables you to significantly reduce tech team burnout and amplify productivity.

Collaboration between developers and operations teams

Not every small business adopts a dedicated “DevOps” team from the get-go. However, it’s critical that development and operations come together in a collaborative environment.

DevOps unites the values, methods, practices, and tools of the two independent teams. This drives them toward a shared goal, mitigates friction points, and improves operational efficiency and productivity.

Observability in DevOps plays a key role in this harmonization. It gives teams a deeper understanding of systems, metrics, and performance, driving collaborative decision-making.

Emphasis on cross-functional skills and knowledge for developers

Collaboration ties in perfectly with employee learning and development. The more collaborative teams are, the more opportunities they have to share knowledge and develop cross-functional skills. For this reason, it’s central to your employee retention strategy.

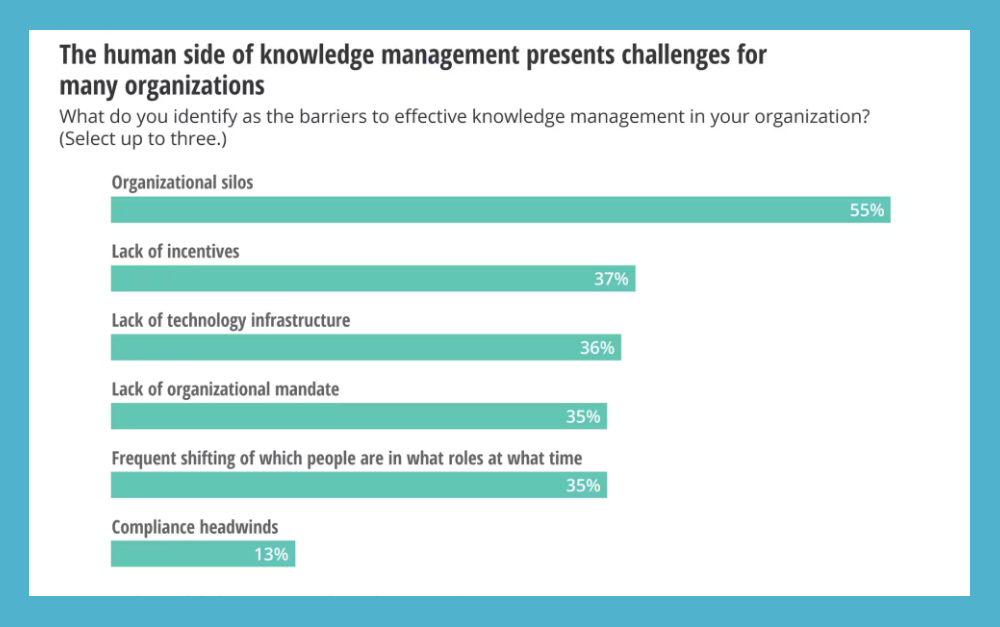

Observability is central to this. When your systems are observable, you prevent informational silos and knowledge hoarding. Both of these issues restrict employee development. Organizational silos and poor technology infrastructure are the biggest obstacles to knowledge sharing for 55% and 38% of businesses respectively.

An observable environment fosters a culture of knowledge-sharing and collaboration, empowering the development of cross-functional skills.

How Developer Observability is Transforming Dev Roles

How does observability impact dev roles and how can you use it to your advantage?

Enhanced troubleshooting and debugging

Developers need to analyze the metrics obtained by monitoring. Then, they must correlate them to the presenting issue. Next, they have to source the location of the error. And this is all before even attempting to implement a fix.

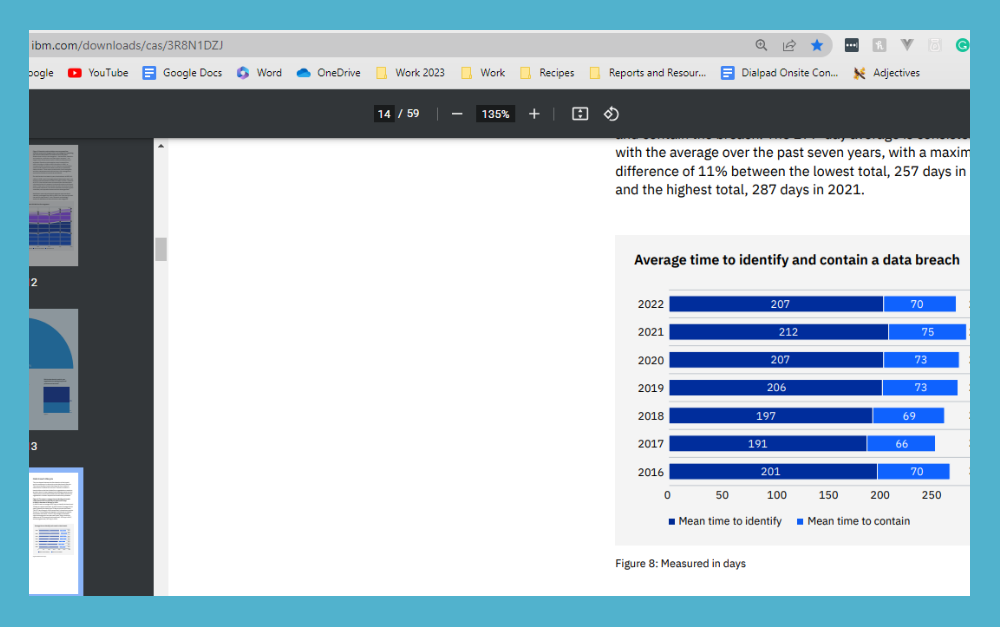

Observability eases the friction points that arise in the manual debugging process. It provides developers with the resources they need to automate and streamline troubleshooting. Supported by advanced capabilities like AI-powered anomaly detection and outage prediction, they can significantly reduce key incident metrics such as mean time to identify (MTTI) and mean time to resolve (MTTR), and this can contribute to a lower change failure rate.
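As a rough illustration of how MTTI and MTTR might be tracked, the Python sketch below averages them over a list of incident records. The incident data, the field names, and the choice to measure both metrics from the moment the incident started are assumptions made for this example; teams define these metrics in slightly different ways.

```python
from datetime import datetime
from statistics import mean

# Hypothetical incident records with ISO 8601 timestamps.
incidents = [
    {
        "started": "2024-01-01T10:00:00",
        "identified": "2024-01-01T10:30:00",
        "resolved": "2024-01-01T12:00:00",
    },
    {
        "started": "2024-01-05T09:00:00",
        "identified": "2024-01-05T09:10:00",
        "resolved": "2024-01-05T10:00:00",
    },
]


def minutes_between(start, end):
    """Return the elapsed minutes between two ISO 8601 timestamps."""
    delta = datetime.fromisoformat(end) - datetime.fromisoformat(start)
    return delta.total_seconds() / 60


def mean_time_metrics(incidents):
    """Average time to identify and time to resolve, both measured from incident start."""
    mtti = mean(minutes_between(i["started"], i["identified"]) for i in incidents)
    mttr = mean(minutes_between(i["started"], i["resolved"]) for i in incidents)
    return {"mtti_minutes": mtti, "mttr_minutes": mttr}


metrics = mean_time_metrics(incidents)
print(metrics)
```

Tracking these two numbers over time is one way to check whether an observability investment is actually shortening the debugging loop.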

Performance optimization and scalability

Performance optimization can’t be achieved without the visibility provided by observability.

To optimize performance, you need in-depth, real-time insight into the behavior and performance of your distributed systems. This includes critical performance metrics for your frontend, backend, and databases. Without this visibility, development teams are forced to make decisions based on assumptions and hunches.

Observability tools deliver essential performance metrics. CPU capacity, response time, peak and average load, uptime, memory usage, error rates—the list goes on. Armed with these insights, you can not only pinpoint and resolve performance issues faster, but drive targeted, performance-optimizing improvements.
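As a small sketch of how a few of these metrics could be derived from raw request data, the Python example below computes average response time, peak response time, and error rate. The request records and the `summarize` helper are hypothetical, and treating HTTP status codes of 500 and above as errors is an assumption of this example.

```python
from statistics import mean

# Hypothetical request records, e.g. parsed from access logs.
requests = [
    {"duration_ms": 120, "status": 200},
    {"duration_ms": 80, "status": 200},
    {"duration_ms": 950, "status": 500},
    {"duration_ms": 100, "status": 200},
]


def summarize(requests):
    """Compute average and peak response time plus the share of server errors."""
    durations = [r["duration_ms"] for r in requests]
    errors = sum(1 for r in requests if r["status"] >= 500)
    return {
        "avg_response_ms": mean(durations),
        "peak_response_ms": max(durations),
        "error_rate": errors / len(requests),
    }


summary = summarize(requests)
print(summary)
```

In practice an observability tool would compute these continuously over time windows, but the underlying arithmetic is similar.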

Of course, the more visibility you have, the more data you’ll accumulate. And the more resources you’ll need to manage this data. Fortunately, observability tools are inherently scalable. They’re designed to cost-effectively ingest, process, and analyze high-volume data in alignment with business growth.

Utilizing dedicated servers can further enhance scalability by providing dedicated computing resources and eliminating the potential performance impact of shared infrastructure.

Continuous integration and deployment (CI/CD)

Observability in CI/CD grants comprehensive visibility into your CI/CD pipeline. Observability tools can monitor and analyze aggregated log data, enabling you to uncover patterns and bottlenecks within CI/CD pipeline runs.
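To illustrate what uncovering a bottleneck might look like, the Python sketch below averages stage durations across several pipeline runs and reports the slowest stage. The stage names, the timing data, and the `slowest_stage` helper are invented for this example and do not reflect any particular CI/CD tool's log format.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical per-stage timings extracted from aggregated pipeline logs.
pipeline_runs = [
    {"run": 1, "stage": "build", "seconds": 60},
    {"run": 1, "stage": "test", "seconds": 300},
    {"run": 1, "stage": "deploy", "seconds": 45},
    {"run": 2, "stage": "build", "seconds": 70},
    {"run": 2, "stage": "test", "seconds": 340},
    {"run": 2, "stage": "deploy", "seconds": 55},
]


def slowest_stage(records):
    """Return the stage with the highest average duration, plus all stage averages."""
    durations_by_stage = defaultdict(list)
    for record in records:
        durations_by_stage[record["stage"]].append(record["seconds"])

    averages = {stage: mean(values) for stage, values in durations_by_stage.items()}
    bottleneck = max(averages, key=averages.get)
    return bottleneck, averages


bottleneck, averages = slowest_stage(pipeline_runs)
print(bottleneck, averages)
```

Here the test stage dominates the run time, so it would be the first candidate for parallelization or caching.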

As a result, you can facilitate a high-efficiency CI/CD environment and accelerate your time to software deployment. Software can travel at speed from code check-in right through to testing and production. And, new features and bug fixes can be delivered continuously in response to the data obtained through observability.

CI/CD tools sometimes come equipped with built-in observability. However, you’ll quickly discover that there’s no way to push built-in capabilities beyond their limits. To maximize CI/CD pipeline observability, you need observability tools with fine data granularity, support for high-cardinality data, and sophisticated labeling.

Collaboration and communication

Did you know that almost 60% of employers report that remote workplaces significantly or moderately increase software developer productivity?

The hybrid workplace is becoming the norm in response to employee demand for increased flexibility. However, remote working can severely limit communication and collaboration efficiency if you don’t have the right tools in place. This is why you need observability software along with other technologies that facilitate communication, like a collaborative cloud contact center solution.

Observability software provides your remote tech team with visibility into distributed workplaces and access to real-time data. You can identify points of friction within your internal infrastructure and resolve them to improve workflows. Plus, teams can use the insights gleaned from centralized, comprehensive dashboards to make pivotal, collaborative decisions.

Whether it’s swiftly identifying performance bottlenecks or proactively addressing tool outages, teams can drive improvements from their remote location. And you don’t have to worry about productivity loss either.

Security and compliance

Managed detection and response solutions leverage observability data to assess a system’s internal state based on its external outputs. This means that observability plays a critical role in cybersecurity and data protection compliance, including verifying that DMARC policies are correctly implemented to prevent email spoofing and phishing attacks. Specifically, it improves threat detection, response, and prevention.

Observability software can perform event capturing, incident reporting, and data analysis across networks and cloud environments. So, not only can you be immediately alerted to resource vulnerabilities and potential attacks, but you can also delve beyond when and where an attack or breach occurred.

Observability can explain why and how the incident occurred and detail the actions that took place. As a result, you can drive security improvements and significantly reduce your incident detection and response times.

According to IBM’s most recent “Cost of a Data Breach Report”, it takes approximately 277 days to identify and contain a breach. So, there’s plenty of room for improvement.

Evolving skill sets and learning opportunities

Utilizing observability to its full potential can illuminate employee learning and development (L&D) opportunities. Any weaknesses you uncover through observability can be used to inspire training courses.

For example, imagine your observable systems trace bugs back to poorly-written source code. You could design training courses that focus on code refactoring and eliminating bad coding habits. This not only helps you tackle and resolve an immediate problem—it also upskills your employees.

In the modern business climate, employee upskilling and reskilling aren’t just things you should be doing to improve the quality of your workforce. They’re things you should be doing to retain your workforce.

Research shows that L&D is a core value for the current workforce. So much so, that 69% would consider switching to another company to pursue upskilling opportunities.

By using observability to create targeted L&D opportunities, you can simultaneously close skill gaps, boost employee satisfaction, and skyrocket business productivity.

Another useful strategy for uncovering high-priority L&D opportunities is monitoring customer calls. Imagine customer service agents begin receiving lots of calls from users complaining about the same UX/UI issue. Not only can your DevOps team fix this issue, but you can provide targeted training to prevent it from recurring.

Conclusion

Observability in DevOps is the key to understanding the internal behavior of your systems. When an issue arises, observability ensures that you don’t just know what’s happening in your system, but why it’s happening. Not only does this speed up debugging, but it delivers critical insights that inform preventative measures.

But as we’ve covered above, observability in DevOps isn’t only useful for debugging. It also speeds up software development lifecycles, drives innovation, improves collaboration, and even channels employee learning and development.